Virtualization, Containerisation, Docker

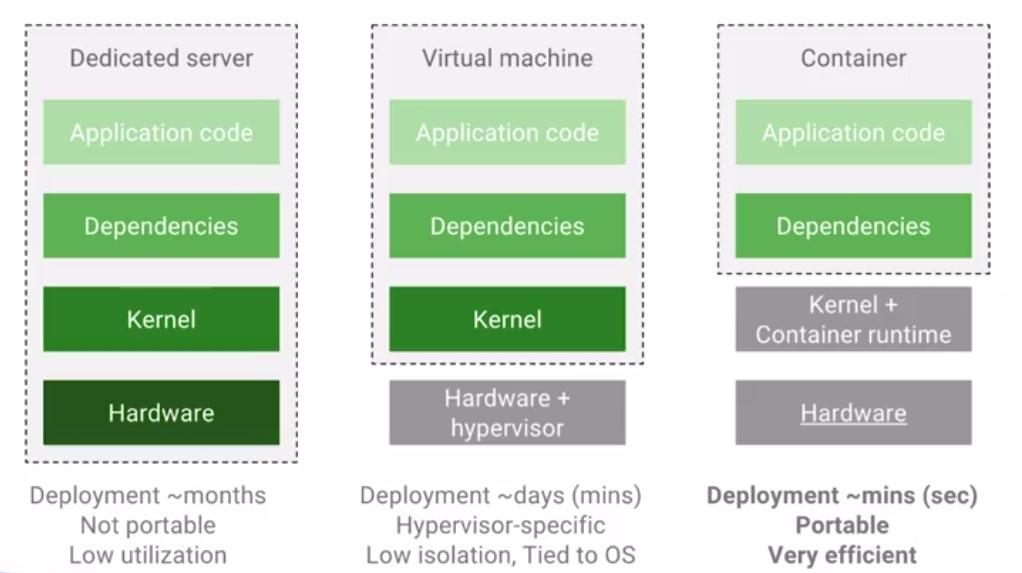

In the past, running an application on a dedicated server meant first acquiring the hardware and then installing an operating system on it. Only after both were in place could the application run. Virtual machines eventually abstracted the hardware away.

Virtualization is a technique for sharing a single hardware system among multiple operating systems. It is mainly used to maximize hardware utilization by deploying applications into VMs, each of which has its own operating system.

A container image is a lightweight, executable package that encapsulates everything that is required to run an application. It includes code, runtime, system tools, system libraries, and settings.

Containerisation solves the problem of how to run an application reliably when it is moved from one environment to another. Say a developer has written and tested the code on their own machine with Java 7, and it works fine. When it is moved to a testing or production environment, it may fail because that server is configured with Java 8.

Docker is used to create, deploy, and manage virtualized application containers on a common operating system (OS). You can find more details on Docker in this blog post: Docker.
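To make the Java version example concrete, here is a minimal sketch of how a container image can pin the runtime so that the same Java version travels with the application. The base image tag `eclipse-temurin:8-jre`, the file name `app.jar`, and the helper name `write_dockerfile` are assumptions for illustration, not details from this post.

```shell
# Hypothetical helper: writes a Dockerfile that pins the Java runtime,
# so every environment runs the application on the same Java version.
write_dockerfile() {
  target_dir="$1"
  cat > "$target_dir/Dockerfile" <<'EOF'
# Assumed base image; substitute the Java version your application needs.
FROM eclipse-temurin:8-jre
# app.jar is a placeholder for your built application.
COPY app.jar /app/app.jar
CMD ["java", "-jar", "/app/app.jar"]
EOF
}

# Usage (placeholder image name):
#   write_dockerfile . && docker build -t my-app:1.0 .
```

Because the runtime is part of the image, the developer's machine and the production server no longer need to agree on which Java version is installed.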

Containers are useful, whether they are Docker containers or Linux containers, and they are scalable as well. But scaling containers by hand is difficult, and once an application has been scaled up, managing the many containers becomes harder still. Autoscaling is missing here, which is where Kubernetes comes in.

Kubernetes

Kubernetes was originally designed by Google and later donated to the Cloud Native Computing Foundation (CNCF). It is written in Go. It is a container management tool that handles container deployment, container auto-scaling, and container load balancing.
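As a rough illustration of the scaling features mentioned above, kubectl offers `scale` and `autoscale` subcommands. The sketch below wraps them in two hypothetical helper functions. The deployment name and the replica and CPU values in the usage lines are placeholders, and the sketch assumes a Deployment already exists in the cluster.

```shell
# Manually set the number of pod replicas for a Deployment.
scale_deployment() {
  kubectl scale "deployment/$1" --replicas="$2"
}

# Let Kubernetes adjust the replica count between a minimum and a maximum,
# targeting an average CPU utilization (in percent).
autoscale_deployment() {
  kubectl autoscale deployment "$1" --min="$2" --max="$3" --cpu-percent="$4"
}

# Usage (placeholder values):
#   scale_deployment web 3
#   autoscale_deployment web 2 5 80
```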

Difference between Docker and Kubernetes: Docker is a container hosting and container runtime platform, while Kubernetes is a container orchestration platform. In other words, containers run on Docker, and Kubernetes manages them.

Kubernetes Architecture

The master node communicates with a number of individual nodes, each of which runs containers. Each VM in the cluster is known as a node instance, and each node instance runs a piece of software called the kubelet, which talks to the master. Pods run on top of the nodes. A pod is the atomic unit of Kubernetes cluster orchestration, and each pod can contain one or more containers. In short, the master talks to node instances, which contain pods, and those pods contain the containers.
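One way to see this hierarchy on a running cluster is with kubectl. The helper names below are hypothetical, and the commands assume kubectl is already configured to talk to a cluster.

```shell
# List the nodes (VMs) that make up the cluster.
list_nodes() {
  kubectl get nodes
}

# List pods along with the node each one is scheduled on (NODE column).
list_pods_with_nodes() {
  kubectl get pods -o wide
}

# Print the names of the containers inside one pod.
list_containers_in_pod() {
  kubectl get pod "$1" -o jsonpath='{.spec.containers[*].name}'
}

# Usage (placeholder pod name):
#   list_nodes
#   list_pods_with_nodes
#   list_containers_in_pod my-pod
```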

Kubernetes on Google Cloud

Google Kubernetes Engine (GKE) provides a managed environment for deploying, managing, and scaling containerized applications on Google infrastructure. The environment consists of Google Compute Engine instances grouped together to form a cluster. Kubernetes Engine clusters are powered by the Kubernetes open source cluster management system, and Kubernetes provides the mechanisms through which you interact with your cluster. You can use Kubernetes commands and resources to deploy and manage your applications, perform administration tasks, set policies, and monitor the health of your deployed workloads.

Creating a Kubernetes cluster and deploying a container

- Use the gcloud container clusters create command to create a cluster running on GKE. You can create different types of clusters, such as zonal, regional, private, and alpha clusters.

- To create a zonal cluster, use the command:

gcloud container clusters create [Cluster_Name] [--zone [Compute_Zone]]

- To create a regional cluster, use the command:

gcloud container clusters create [Cluster_Name] --region [Region] [--node-locations [Compute_Zone,[Compute_Zone]...]]
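With placeholder values filled in, the two creation commands might look like the following sketch. The cluster names, zone, and region in the usage lines are assumptions chosen for illustration, and the helper functions are hypothetical wrappers, not part of gcloud.

```shell
# Create a zonal cluster in a single compute zone.
create_zonal_cluster() {
  gcloud container clusters create "$1" --zone "$2"
}

# Create a regional cluster, optionally restricting nodes to specific zones
# (comma-separated, for example "us-central1-a,us-central1-b").
create_regional_cluster() {
  if [ -n "${3:-}" ]; then
    gcloud container clusters create "$1" --region "$2" --node-locations "$3"
  else
    gcloud container clusters create "$1" --region "$2"
  fi
}

# Usage (placeholder names):
#   create_zonal_cluster demo-zonal us-central1-a
#   create_regional_cluster demo-regional us-central1 us-central1-a,us-central1-b
```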

- You can use gcloud container clusters describe [Cluster_Name] to view the details of a cluster, gcloud container clusters list to list your clusters, and gcloud compute instances list to see the VM instances that you have running.

- Use the kubectl command-line tool to work with Kubernetes clusters.

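- You can now deploy a containerized application to the cluster. Start a workload from the container image by running the kubectl run command (older kubectl releases created a Deployment from this command; since kubectl 1.18 it creates a single pod):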

kubectl run [Deployment_Name] --image=[Image] --port [Port_Number]

--image specifies a container image to deploy. --port specifies the port that the container exposes.

- Deploying a container to your cluster means that a pod has been created in the cluster.

- Use the kubectl get pods command to check the status of your pods. While the READY column shows 0/1 and the status is ContainerCreating, the pod is not ready yet. Run the command a few more times to watch the status update. Eventually the status changes to Running and READY shows 1/1.

- By default, a pod is reachable only from machines within the cluster. To expose the pod, use the command below:

kubectl expose pod [Pod_Name] --name=[Service_Name] --type=LoadBalancer

- Use the kubectl describe services command, followed by the service name, to see information about your service. In the output, find the LoadBalancer Ingress IP address and open it in a browser to view the site running on the Kubernetes cluster.
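Putting the deployment steps together, a minimal end-to-end sketch might look like this. The function and argument names are hypothetical, and the example assumes that the cluster already exists, that gcloud and kubectl are installed, and that kubectl run creates a pod named after its first argument. It also uses kubectl wait in place of re-running kubectl get pods by hand, which is a swapped-in convenience rather than a step from the walkthrough.

```shell
# Fetch credentials so kubectl can talk to the cluster.
connect_to_cluster() {
  gcloud container clusters get-credentials "$1" --zone "$2"
}

# Start a pod from an image, wait until it is Ready, then expose it
# through a LoadBalancer Service.
deploy_and_expose() {
  pod_name="$1"; image="$2"; port="$3"; service_name="$4"
  kubectl run "$pod_name" --image="$image" --port="$port" &&
  kubectl wait --for=condition=Ready "pod/$pod_name" --timeout=120s &&
  kubectl expose pod "$pod_name" --name="$service_name" --type=LoadBalancer
}

# Print the external IP of the Service once the load balancer is provisioned.
service_external_ip() {
  kubectl get service "$1" -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Usage (placeholder names):
#   connect_to_cluster demo-zonal us-central1-a
#   deploy_and_expose web nginx 80 web-svc
#   service_external_ip web-svc
```

The external IP can take a short while to appear, so service_external_ip may print nothing at first.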

Here is some more information on Kubernetes Engine.
