Tudip

A Virtual Private Cloud (VPC) network, or simply a network, is a virtual version of a physical network. In Google Cloud networking, networks provide data connections into and out of cloud resources, mostly Compute Engine instances. Securing networks is critical to securing data and controlling access to resources.

Google Cloud networking achieves flexible, logical isolation of unrelated resources through several levels of organization.

Levels of Google Cloud Networking

Projects

Projects are the outermost container and are used to group resources that share the same trust boundary. Many developers map projects to teams, since every project has its own access policy (IAM) and member list. Projects also collect billing and quota details that reflect resource consumption. Projects contain networks, which in turn contain subnetworks, firewall rules, and routes.

Networks

Networks directly connect our resources to each other and to the outside world. Through firewall rules, networks also hold the access policies for incoming and outgoing connections. A VPC network is a global resource, which offers horizontal scalability across multiple regions, while the subnetworks inside it are regional, which lets us keep related resources within a single region for low latency. A VPC network consists of one or more IP range partitions called subnetworks (or subnets), and each subnet is associated with a region. The VPC network itself has no IP range associated with it; IP ranges are defined on its subnets. A network must have at least one subnet before we can use it to host instances.

Subnetworks

Subnetworks allow us to group related resources (Compute Engine instances) into RFC1918 private address spaces. Subnetworks are regional resources. Each subnetwork defines a range of IP addresses.
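
To make "RFC 1918 private address space" concrete, here is a small sketch using Python's standard ipaddress module. It checks whether a proposed subnet range lies entirely inside one of the three RFC 1918 blocks. The helper name is_rfc1918 is our own; it is a local check that models only the RFC 1918 rule described above, not a Google Cloud API.

```python
import ipaddress

# The three private IPv4 blocks defined by RFC 1918.
RFC1918_BLOCKS = (
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
)


def is_rfc1918(cidr: str) -> bool:
    """Return True if the whole CIDR range lies inside one RFC 1918 block."""
    net = ipaddress.ip_network(cidr)  # raises ValueError if host bits are set
    # Version check first: subnet_of() rejects mixed IPv4/IPv6 comparisons.
    return net.version == 4 and any(net.subnet_of(b) for b in RFC1918_BLOCKS)


# Subnet ranges used later in this article, plus one public range for contrast.
for cidr in ("10.0.0.0/16", "192.168.1.0/24", "10.2.0.0/16", "8.8.8.0/24"):
    print(cidr, is_rfc1918(cidr))
```

Checking the whole range with subnet_of, rather than just its first address, catches ranges that start inside a private block but spill out of it.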

A network can be in one of two modes:

- Auto mode network

- Custom mode network

Auto mode network:

An auto mode network has one subnet per region, each with a predetermined IP range that fits within the 10.128.0.0/9 CIDR block. These subnets are created automatically when the auto mode network is created, and each subnet has the same name as the overall network. When new GCP regions become available, subnets for those regions are automatically added to auto mode networks, using IP ranges from the same block. We can also add subnets manually to an auto mode network, in addition to the automatically created ones.
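
A quick bit of arithmetic shows why one /9 block leaves room for future regions. The Python sketch below assumes each automatically created subnet is a /20, the size Google's documentation gives for auto mode subnets. The helper conflicts_with_auto_block is hypothetical; it reflects our assumption that manually added subnets should stay outside 10.128.0.0/9 so they cannot collide with current or future automatic subnets.

```python
import ipaddress

# Block that auto mode subnets are carved from (from the text above).
AUTO_MODE_BLOCK = ipaddress.ip_network("10.128.0.0/9")
# Assumption: each automatically created regional subnet is a /20.
AUTO_SUBNET_PREFIX = 20

# Number of /20 ranges that fit in the /9 block: 2 ** (20 - 9) = 2048.
capacity = 2 ** (AUTO_SUBNET_PREFIX - AUTO_MODE_BLOCK.prefixlen)


def conflicts_with_auto_block(cidr: str) -> bool:
    """True if a manually added subnet range would overlap 10.128.0.0/9."""
    return ipaddress.ip_network(cidr).overlaps(AUTO_MODE_BLOCK)


print(capacity)                                    # 2048
print(conflicts_with_auto_block("10.0.0.0/16"))    # False
print(conflicts_with_auto_block("10.130.0.0/20"))  # True
```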

Custom mode network:

A custom mode network starts with no subnets, giving us full control over subnet creation. To create an instance in a custom mode network, we must first create a subnetwork in that instance's region and specify its IP range. A custom mode network can have zero, one, or many subnets per region.
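
With full control comes the responsibility of choosing ranges that do not collide, because subnet ranges within one VPC network must not overlap. This Python sketch validates a hypothetical subnet plan before any subnets are created; the function name find_overlaps and the plan itself are illustrative, not part of gcloud.

```python
import ipaddress
from itertools import combinations
from typing import Dict, List, Tuple


def find_overlaps(plan: Dict[str, str]) -> List[Tuple[str, str]]:
    """Return pairs of subnet names whose IPv4 ranges overlap.

    `plan` maps a subnet name to its CIDR range. Subnet ranges in one VPC
    network must not overlap, whichever regions the subnets are in.
    """
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in plan.items()}
    return [
        (a, b)
        for a, b in combinations(sorted(nets), 2)
        if nets[a].overlaps(nets[b])
    ]


# Hypothetical subnet plan for a custom mode network.
plan = {
    "subnet-us-central": "10.0.0.0/16",
    "subnet-us-east": "10.1.0.0/16",
    "subnet-europe": "10.0.128.0/20",  # lies inside 10.0.0.0/16
}
print(find_overlaps(plan))  # [('subnet-europe', 'subnet-us-central')]
```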

We can switch a network from auto mode to custom mode, but the conversion is one-way: a custom mode network cannot be changed back to auto mode.

When a new project is created, it gets a default network: an auto mode network with one subnet in each region and pre-populated firewall rules.

We can create up to four additional networks in a project (this limit is set by the project's network quota, so the current value may differ). Additional networks can be either auto mode or custom mode networks.

Each instance created in a subnetwork is assigned an internal IPv4 address from that subnetwork's range.
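
Not every address in a subnet's range is available to instances. As a worked example, the Python sketch below assumes Google Cloud's documented reservation of four addresses in each primary IPv4 subnet range: the network address, the default gateway, the second-to-last address, and the broadcast address. The helper names are our own.

```python
import ipaddress
from typing import List


def reserved_addresses(cidr: str) -> List[str]:
    """The four addresses assumed reserved in a primary IPv4 subnet range."""
    net = ipaddress.ip_network(cidr)
    return [
        str(net[0]),   # network address
        str(net[1]),   # default gateway
        str(net[-2]),  # second-to-last address, reserved by Google Cloud
        str(net[-1]),  # broadcast address
    ]


def usable_instance_addresses(cidr: str) -> int:
    """Addresses left for instances after the four reserved ones."""
    return ipaddress.ip_network(cidr).num_addresses - 4


print(reserved_addresses("10.240.0.0/24"))
print(usable_instance_addresses("10.240.0.0/24"))  # 252
print(usable_instance_addresses("10.2.0.0/16"))    # 65532
```

So a /24 subnet such as 10.240.0.0/24 can hold at most 256 - 4 = 252 instance addresses.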

Note: Because the default network allows relatively open access, it is a recommended best practice to delete it. The default network cannot be deleted unless another network is present. Before deleting a VPC network, make sure to delete all the firewall rules associated with it.

Firewalls

Each network has an implied firewall rule that blocks all inbound traffic to instances (deny all ingress). To allow traffic to reach an instance, we must create “allow” firewall rules. A second implied rule allows all outbound traffic from instances (allow all egress) unless we add “egress” rules that block outbound connections. Both implied rules have the lowest possible precedence (priority 65535). Therefore, we typically create ingress “allow” rules for the traffic we want to let in, and egress “deny” rules for the outbound traffic we want to restrict. We can also create a default-deny policy for egress to prohibit external connections entirely.

In general, it is recommended to create the least permissive firewall rule that supports the traffic we are trying to pass. For example, if we want traffic to reach some instances but not others, we should create rules that allow traffic only to the intended instances. This more restrictive configuration is more predictable than one large firewall rule that allows traffic to all instances. If we need “deny” rules to override certain “allow” rules, we can set a priority on each rule; the rule with the lowest priority number is evaluated first. Avoid building large, complex sets of override rules, because they can end up allowing or blocking traffic unintentionally.

The default network comes with automatically created firewall rules; manually created networks do not. For every network other than the default network, we must create any firewall rules we need ourselves.

The ingress firewall rules which are automatically created for the default network are:

- default-allow-internal: Allows network connections of any protocol and port between instances on the network.

- default-allow-ssh: Allows SSH connections from any source to any instance on the network over TCP port 22.

- default-allow-rdp: Allows RDP connections from any source to any instance on the network over TCP port 3389.

- default-allow-icmp: Allows ICMP traffic from any source to any instance on the network.

We cannot SSH into an instance unless a firewall rule allows SSH traffic (TCP port 22) to it.

Every firewall rule has a priority value from 0 to 65535, inclusive. Relative priority values determine the precedence of conflicting rules: a lower value means higher precedence. If no priority is specified, 1000 is used. If a packet matches conflicting rules with the same priority, the deny rule takes precedence.
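
The precedence rules above fit in a few lines of Python. This is a simplified, hypothetical model rather than the Google Cloud API: each Rule matches only on direction and an optional single port (ignoring protocols, source ranges, and tags), and the two implied rules are modeled at priority 65535.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    """Simplified firewall rule: matches on direction and, optionally, one port."""
    name: str
    direction: str              # "INGRESS" or "EGRESS"
    action: str                 # "ALLOW" or "DENY"
    priority: int = 1000        # default used when no priority is given
    port: Optional[int] = None  # None matches every port

    def __post_init__(self) -> None:
        if not 0 <= self.priority <= 65535:
            raise ValueError(f"priority {self.priority} outside 0-65535")


# Implied rules at the lowest possible precedence (priority 65535).
IMPLIED_RULES = [
    Rule("implied-deny-ingress", "INGRESS", "DENY", 65535),
    Rule("implied-allow-egress", "EGRESS", "ALLOW", 65535),
]


def decide(rules: List[Rule], direction: str, port: int) -> Rule:
    """Return the rule that wins for a packet with this direction and port."""
    matching = [
        r for r in rules + IMPLIED_RULES
        if r.direction == direction and r.port in (None, port)
    ]
    # Lowest priority number wins; on a tie, DENY (False sorts first) wins.
    return min(matching, key=lambda r: (r.priority, r.action != "DENY"))


rules = [
    Rule("allow-http", "INGRESS", "ALLOW", port=80),
    Rule("deny-http", "INGRESS", "DENY", port=80),  # same priority 1000
    Rule("allow-ssh", "INGRESS", "ALLOW", priority=900, port=22),
    Rule("deny-ssh", "INGRESS", "DENY", priority=2000, port=22),
]
print(decide(rules, "INGRESS", 80).name)   # deny-http
print(decide(rules, "INGRESS", 22).name)   # allow-ssh
print(decide(rules, "INGRESS", 443).name)  # implied-deny-ingress
print(decide(rules, "EGRESS", 443).name)   # implied-allow-egress
```

Because the implied rules always match, decide never runs out of candidates; any explicit rule with a priority below 65535 overrides them.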

We can review the default firewall rules in the console through this path: Navigation menu > VPC network > Firewall rules.

GCP firewall rules are stateful: when a rule allows a connection in one direction, the return traffic for that connection is automatically allowed, regardless of any other rules.
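
Statefulness means the firewall remembers allowed connections. The toy Python model below tracks connections for a single instance; the class name StatefulFirewall, its all-or-nothing egress setting, and the per-port ingress check are hypothetical simplifications, and the IP addresses come from documentation ranges.

```python
from typing import Set, Tuple

# (instance_ip, instance_port, remote_ip, remote_port), seen from the instance.
Conn = Tuple[str, int, str, int]


class StatefulFirewall:
    """Toy model of connection tracking for a single instance.

    Hypothetical simplification: ingress is allowed only to listed ports,
    and egress is either fully allowed or fully denied.
    """

    def __init__(self, ingress_ports: Set[int], egress_allowed: bool) -> None:
        self.ingress_ports = ingress_ports
        self.egress_allowed = egress_allowed
        self.tracked: Set[Conn] = set()

    def inbound(self, remote_ip: str, remote_port: int,
                local_ip: str, local_port: int) -> bool:
        conn = (local_ip, local_port, remote_ip, remote_port)
        if conn in self.tracked:      # return traffic for a tracked connection
            return True
        if local_port in self.ingress_ports:
            self.tracked.add(conn)    # new connection allowed by a rule
            return True
        return False

    def outbound(self, local_ip: str, local_port: int,
                 remote_ip: str, remote_port: int) -> bool:
        conn = (local_ip, local_port, remote_ip, remote_port)
        if conn in self.tracked:      # return traffic for a tracked connection
            return True
        if self.egress_allowed:
            self.tracked.add(conn)    # new outbound connection allowed
            return True
        return False


# Egress is blocked, but replies to an allowed inbound SSH session still flow.
fw = StatefulFirewall(ingress_ports={22}, egress_allowed=False)
print(fw.inbound("203.0.113.5", 50000, "10.0.0.2", 22))     # True
print(fw.outbound("10.0.0.2", 22, "203.0.113.5", 50000))    # True (return traffic)
print(fw.outbound("10.0.0.2", 40000, "198.51.100.7", 443))  # False (new egress)
```

The reverse case works the same way: with egress allowed and no ingress rules, replies to an outbound request get in, while unsolicited inbound packets are dropped.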

Firewall Rules and IAM

In IAM, the privilege of creating, modifying, and deleting firewall rules is reserved for the compute.securityAdmin role. Users assigned the compute.networkAdmin role can safely view and list the firewall rules that apply to their projects.

Allow/Ingress Rules

ALLOW and INGRESS are values we can set for the --action and --direction flags when creating firewall rules. --action takes one of two values: ALLOW or DENY. ALLOW was previously the only supported action. DENY rules are generally simpler to understand and apply.

--direction takes one of two values: INGRESS, which applies to inbound connections, or EGRESS, which applies to outbound connections.

By combining --direction (INGRESS or EGRESS), --action (ALLOW or DENY), and --priority, we get fine-grained control over which connections can enter and leave our networks.

Network route

Every network automatically gets a route to the Internet (the default route) and routes to the IP ranges of its subnets. Route names are generated automatically, so they look different in each project.
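
To see how the default route and a subnet route coexist, this Python sketch picks the matching route with the longest prefix, so traffic to the subnet's range stays inside the network and everything else goes to the Internet gateway. It is a simplified model that considers only destination specificity and ignores route priorities and other route types; the route names are made up, since real names are auto-generated.

```python
import ipaddress
from typing import List, Optional, Tuple

# (route name, destination range, next hop). Names are made up for
# illustration; real auto-generated route names differ per project.
Route = Tuple[str, str, str]

ROUTES: List[Route] = [
    ("default-route", "0.0.0.0/0", "default-internet-gateway"),
    ("subnet-route-us-central", "10.0.0.0/16", "subnet-us-central"),
]


def select_route(dest_ip: str, routes: List[Route]) -> Optional[Route]:
    """Pick the matching route with the longest prefix (most specific)."""
    dest = ipaddress.ip_address(dest_ip)
    matches = [r for r in routes if dest in ipaddress.ip_network(r[1])]
    if not matches:
        return None  # no route covers this destination
    return max(matches, key=lambda r: ipaddress.ip_network(r[1]).prefixlen)


print(select_route("10.0.3.7", ROUTES)[0])  # subnet-route-us-central
print(select_route("8.8.8.8", ROUTES)[0])   # default-route
```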

We can review the default routes using the console through this path: Navigation menu > VPC network > Routes.

Creating a custom network

When assigning subnetwork ranges manually, we first create a custom mode network, then create the subnetworks we want in each region. A new subnetwork's name must be unique within the project for that region, even across networks. The same name can be reused in a project as long as each subnet is in a different region.
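
The naming rule amounts to "unique per (region, name) pair within a project". The Python sketch below models it with a hypothetical SubnetNameRegistry class; it is an illustration of the rule, not how Google Cloud stores subnets.

```python
from typing import Dict, Tuple


class SubnetNameRegistry:
    """Hypothetical model of subnet-name uniqueness within one project.

    A name may be reused in different regions, but not twice in the same
    region, even if the two subnets belong to different networks.
    """

    def __init__(self) -> None:
        self._owners: Dict[Tuple[str, str], str] = {}  # (region, name) -> network

    def create(self, name: str, region: str, network: str) -> None:
        key = (region, name)
        if key in self._owners:
            raise ValueError(
                f"subnet {name!r} already exists in {region} "
                f"(network {self._owners[key]!r})"
            )
        self._owners[key] = network


registry = SubnetNameRegistry()
registry.create("subnet-us-central", "us-central1", "custom-network")
registry.create("subnet-us-central", "us-east1", "custom-network")  # other region: OK
try:
    # Same name and region in a different network is still rejected.
    registry.create("subnet-us-central", "us-central1", "another-network")
except ValueError as err:
    print(err)
```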

We can create a custom network either in the Cloud Console or from Cloud Shell. The console's UI makes the process straightforward, so here we show only the Cloud Shell commands.

The following command will create a custom mode network named custom-network.

gcloud compute networks create custom-network --subnet-mode custom

The following command creates a subnet named subnet-us-central in the custom mode network we just created.

gcloud compute networks subnets create subnet-us-central \
    --network custom-network \
    --region us-central1 \
    --range 10.0.0.0/16

Adding firewall rules

To allow access to VM instances, we must apply firewall rules. We can use instance tags to apply a firewall rule to specific VM instances: the rule applies to every VM that carries the matching tag.

Instance Tags are used by networks and firewalls to apply certain firewall rules to tagged VM instances. For example, if there are several instances that perform the same task, such as serving a large website, we can tag these instances with a shared word or term and then use that tag to allow HTTP access to those instances with a firewall rule. Tags are also reflected in the metadata server, so we can use them for applications running on our instances.

Firewall rules can be created either in the Cloud Console or from Cloud Shell; here we use Cloud Shell.

The following command will create a firewall rule to allow HTTP internet requests.

gcloud compute firewall-rules create allow-http \
    --allow tcp:80 \
    --network custom-network \
    --source-ranges 0.0.0.0/0 \
    --target-tags http

This rule allows HTTP requests from any source (0.0.0.0/0), but only to instances that carry the http tag, because we set the --target-tags flag.

Here is an example command to create an instance which has tags.

gcloud compute instances create us-test-01 \
    --subnet subnet-us-central \
    --zone us-central1-a \
    --tags http
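
To see how target tags and source ranges combine, here is a small Python model of the allow-http rule and the tagged us-test-01 instance from the commands above. The classes are hypothetical simplifications (one TCP port per rule, ALLOW only), and untagged-vm is an invented instance used for contrast. A rule with no target tags is modeled as applying to every instance in the network.

```python
import ipaddress
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class Instance:
    name: str
    tags: FrozenSet[str] = frozenset()


@dataclass(frozen=True)
class IngressAllowRule:
    """Simplified ingress ALLOW rule: one TCP port, source ranges, target tags."""
    name: str
    port: int
    source_ranges: Tuple[str, ...]
    target_tags: FrozenSet[str] = frozenset()  # empty: applies to all instances

    def permits(self, instance: Instance, source_ip: str, port: int) -> bool:
        if port != self.port:
            return False
        if self.target_tags and not (self.target_tags & instance.tags):
            return False  # instance carries none of the target tags
        src = ipaddress.ip_address(source_ip)
        return any(src in ipaddress.ip_network(r) for r in self.source_ranges)


allow_http = IngressAllowRule("allow-http", 80, ("0.0.0.0/0",), frozenset({"http"}))
us_test_01 = Instance("us-test-01", frozenset({"http"}))
untagged = Instance("untagged-vm")  # hypothetical instance without the tag

print(allow_http.permits(us_test_01, "203.0.113.9", 80))  # True
print(allow_http.permits(untagged, "203.0.113.9", 80))    # False
print(allow_http.permits(us_test_01, "203.0.113.9", 22))  # False
```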

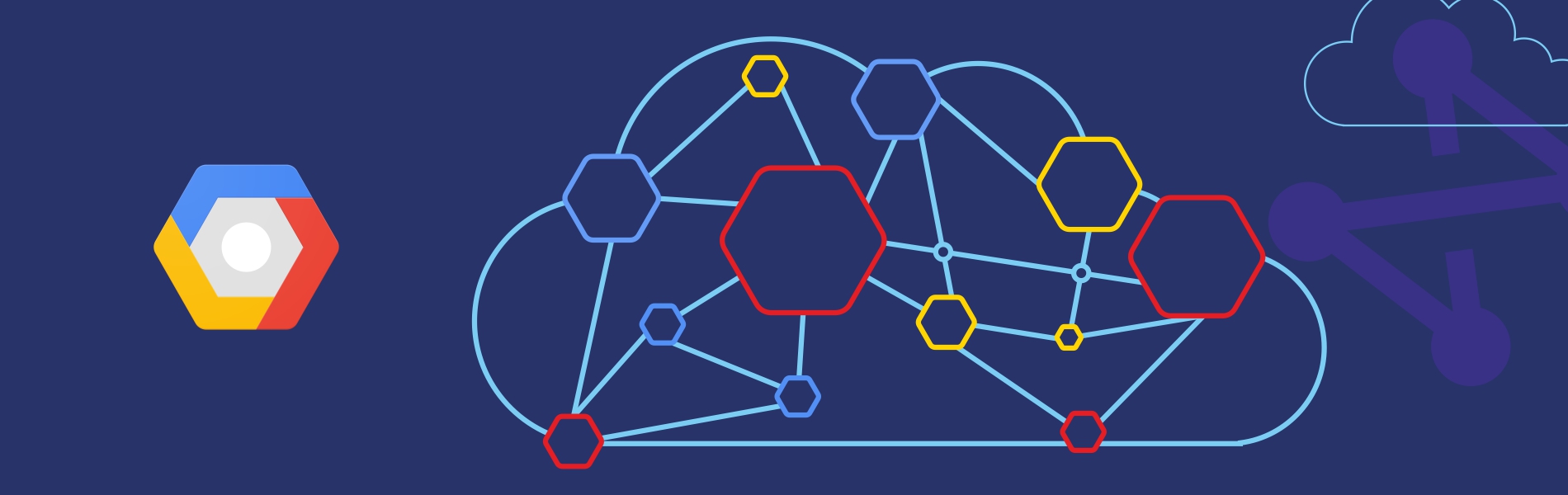

VPC Network Example:

In the above example, subnet1 is defined as 10.240.0.0/24 in the us-west1 region. There are 2 VM instances in the us-west1-a zone in this subnet, and their IP addresses come from subnet1's available range.

Subnet2 is defined as 192.168.1.0/24 in the us-east1 region. There are 2 VM instances in the us-east1-a zone in this subnet, and their IP addresses come from subnet2's available range.

Subnet3 (mislabeled as subnet1 in the diagram) is defined as 10.2.0.0/16 in the us-east1 region. It has one VM instance in the us-east1-a zone and a second in the us-east1-b zone, each receiving an IP address from subnet3's range. Because subnets are regional resources, an instance's network interface can be associated with any subnet in the region that contains the instance's zone.
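
The regional rule can be checked mechanically. This Python sketch encodes the three subnets from the example and lists which ones an instance in a given zone could attach to. It assumes the usual zone naming convention (region name plus a "-letter" suffix), and the sample address 10.2.0.5 is hypothetical, since the diagram's exact instance IPs are not reproduced here.

```python
import ipaddress
from typing import Dict, List, Tuple

# Subnets from the example above: name -> (region, primary range).
SUBNETS: Dict[str, Tuple[str, str]] = {
    "subnet1": ("us-west1", "10.240.0.0/24"),
    "subnet2": ("us-east1", "192.168.1.0/24"),
    "subnet3": ("us-east1", "10.2.0.0/16"),
}


def region_of(zone: str) -> str:
    """Strip the zone suffix, e.g. 'us-east1-b' -> 'us-east1' (naming assumption)."""
    return zone.rsplit("-", 1)[0]


def eligible_subnets(zone: str) -> List[str]:
    """Subnets an instance in `zone` could attach a network interface to."""
    region = region_of(zone)
    return sorted(name for name, (r, _) in SUBNETS.items() if r == region)


def address_fits(subnet: str, ip: str) -> bool:
    """True if `ip` lies inside the subnet's primary range."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(SUBNETS[subnet][1])


print(eligible_subnets("us-east1-b"))  # ['subnet2', 'subnet3']
print(eligible_subnets("us-west1-a"))  # ['subnet1']
print(address_fits("subnet3", "10.2.0.5"), address_fits("subnet1", "10.2.0.5"))  # True False
```

This is why the us-east1-b instance can sit in subnet3 even though the other subnet3 instance is in us-east1-a: both zones belong to the us-east1 region.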
